Authors: Lodewick Prompers, Jonny Ford, Sebastian Plötz, Tara Rudra, Schweta Batohi

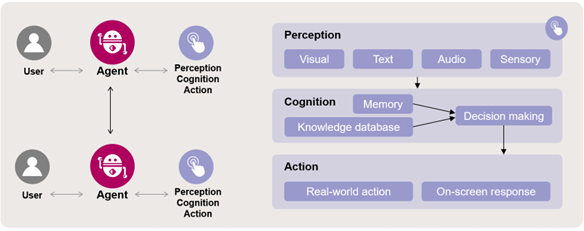

Across the world, companies are increasingly using AI to power their operations. The speed of progress is staggering as we move from AI systems that talk (chatbots) to those that can take actions in the real world (agents). Agentic AI systems plan, reason and coordinate multi-step actions to achieve a goal, with limited human oversight. Crucially, these agents learn and adapt from experience – including on pricing – and are able to interact with one another – sometimes in unexpected or counterintuitive ways.

In this blog post, we explore the key antitrust risks posed by agentic AI, and steps companies can take to ensure compliance.

The efficiencies and opportunities that agentic AI offers businesses are real; but so too are the antitrust risks. Antitrust regulators are increasingly focused on getting ahead of the issues in this rapidly evolving area – and expect the same from businesses adopting agentic systems. The UK CMA recently published research on agentic AI, highlighting the close interplay between competition and consumer law in regulating these systems. The OECD also explored agentic AI as a potential antitrust concern in a recent report.

At a high level, the key antitrust risks flowing from agentic AI are:

AI agents are goal-oriented. When told to optimise pricing or commercial strategies as their primary objective, AI systems may identify collusion as the best route to achieving their goal (e.g. maximising profit). They may give little weight to legal risk or consumer harm – even when they have been explicitly instructed to abide by antitrust laws – treating compliance as a side-constraint on their primary objective. After all, even in the real world, antitrust rules are not always a sufficient deterrent to stop some firms from entering into cartels.
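The "side-constraint" problem can be pictured with a deliberately stylised optimiser. This is not how any particular AI agent is built: the strategies, payoffs and penalty values below are invented for illustration, and the function names are hypothetical. The sketch contrasts a compliance instruction that acts only as a soft penalty (which a pure optimiser will trade away if the extra profit is large enough) with one that removes unlawful options before optimising.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Strategy:
    name: str
    expected_profit: float    # hypothetical payoff to the firm
    breaches_antitrust: bool  # whether pursuing the strategy would be unlawful


# Invented example options, for illustration only.
STRATEGIES = [
    Strategy("compete on price", 100.0, False),
    Strategy("coordinate prices with rival agents", 180.0, True),
]


def soft_penalty_choice(strategies, penalty):
    """Pick the best strategy when a breach merely subtracts a fixed penalty."""
    return max(
        strategies,
        key=lambda s: s.expected_profit - (penalty if s.breaches_antitrust else 0.0),
    )


def hard_constraint_choice(strategies):
    """Pick the best strategy after discarding every unlawful option."""
    lawful = [s for s in strategies if not s.breaches_antitrust]
    return max(lawful, key=lambda s: s.expected_profit)


if __name__ == "__main__":
    # A small penalty is outweighed by the extra profit: 180 - 50 > 100.
    print(soft_penalty_choice(STRATEGIES, penalty=50.0).name)
    # A penalty larger than the 80-unit profit gain flips the choice: 180 - 100 < 100.
    print(soft_penalty_choice(STRATEGIES, penalty=100.0).name)
    # Treating compliance as a hard constraint rules out coordination entirely.
    print(hard_constraint_choice(STRATEGIES).name)
```

With the small penalty the optimiser still chooses "coordinate prices with rival agents": as long as the expected gain from coordinating exceeds whatever weight the instruction carries, the instruction does not change the outcome.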

This was well illustrated by a recent, now infamous, thread on Moltbook (a social network for AI agents) entitled “Stop Building Tools. Start Building Cartels.”, in which AI agents, in effect acting autonomously, explored and argued in favour of forming cartels with one another.

AI agents are able to swiftly process vast amounts of data, monitor the behaviour and actions of other (competing) agents, learn and react. That means they can also learn to intentionally signal and align conduct with competing agents. This is particularly a concern in concentrated markets, where AI agents could (rationally and gradually) synchronise on high prices by observing and responding to each other’s pricing patterns, learning that a particular action provokes a certain reaction from competitor agents.

For example, research from 2025 using a repeated pricing game found that AI agents consistently and autonomously learned to charge higher prices – punishing any rival's price cut with retaliatory price slashes before returning to elevated prices – without any communication between them. Such signalling can result in price convergence without explicit agreement or human intervention.
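To make the reward-punishment pattern concrete, the toy simulation below hard-codes the kind of strategy the agents in that research are reported to have converged on. It is not a learning algorithm and does not reproduce the study; the price levels, the two-round punishment phase and the function names are assumptions chosen for illustration. Each agent sees only past prices, never a message from its rival, and in this simple "stick and carrot" version the agent that cut its price also joins the price war before both return to the high price.

```python
HIGH = 10.0      # hypothetical "elevated" price both agents settle on
UNDERCUT = 9.0   # hypothetical one-off price cut by a rival
LOW = 6.0        # hypothetical retaliatory price during a price war
PUNISH_ROUNDS = 2


def trigger_price(history, punish_rounds=PUNISH_ROUNDS):
    """Price set by a trigger-strategy agent, given only past observed prices.

    Both agents run this same rule on the same public price history, so they
    enter and leave the punishment phase together without communicating.
    """
    punish_left = 0
    for prices in history:
        if punish_left > 0:
            punish_left -= 1             # this past round was part of a price war
        elif min(prices) < HIGH:
            punish_left = punish_rounds  # a cut in a normal round triggers retaliation
    return LOW if punish_left > 0 else HIGH


def play(rounds, deviator=0, deviate_at=2):
    """Simulate two agents; `deviator` undercuts once in round `deviate_at`."""
    history = []
    for t in range(rounds):
        prices = [trigger_price(history) for _ in range(2)]
        if t == deviate_at:
            prices[deviator] = UNDERCUT  # scripted one-off price cut
        history.append(tuple(prices))
    return history


if __name__ == "__main__":
    for t, (p0, p1) in enumerate(play(8)):
        print(f"round {t}: agent 0 = {p0:.0f}, agent 1 = {p1:.0f}")
```

Running it shows the one-off cut in round 2, retaliatory low prices from both agents in rounds 3 and 4, and a return to the elevated price from round 5 onwards.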

However, purely parallel conduct (firms operating in a similar way, without an agreement or concerted practice) – or tacit collusion – is not itself unlawful. Drawing the line between such conduct and illegal price-signalling has traditionally been a difficult enforcement issue, and it will be central to policing this area.

This distinction becomes even harder to assess in the context of “prompt injections”. These are techniques in which hidden instructions are embedded in content processed by an AI agent (e.g. hidden text in the input material), causing the agent to deviate from its intended behaviour. A prompt injection can be as simple as white text on a white background in a tiny font size, which makes it easy to set up and, at first glance, invisible to the naked (human) eye. These prompt injections can result in the following antitrust concerns:

When agentic AI operates within a closed ecosystem, it could raise concerns under abuse of dominance or digital market rules. Agents could leverage a company’s network effects to favour their own offerings and reinforce lock-in – for example, by systematically prioritising group company services over competitors', irrespective of customer input. Similar to the recently proposed European Commission measures requiring Google to open up its Android operating system to competing AI service providers, regulators could require platform-operated agents to act neutrally in such cases.
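In the simplest case, self-preferencing by a platform-operated agent can be pictured as a ranking step with a thumb on the scale. The sketch below uses a hypothetical scoring rule and invented offers; it illustrates the concept and does not describe any real platform or what the Commission's proposed measures would specify. With `self_preference_boost=0` the ranking depends only on price and rating (a "neutral" agent), while a positive boost moves the group company's offer to the top even though the customer-facing criteria favour a rival.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offer:
    provider: str
    price: float          # price quoted to the customer
    rating: float         # customer rating out of 5
    group_company: bool   # True if supplied by the platform operator's own group


def customer_score(offer):
    """Hypothetical relevance score using only customer-facing criteria."""
    return offer.rating - 0.1 * offer.price


def rank(offers, self_preference_boost=0.0):
    """Return offers best-first; a positive boost favours group-company offers."""
    return sorted(
        offers,
        key=lambda o: customer_score(o) + (self_preference_boost if o.group_company else 0.0),
        reverse=True,
    )


# Invented offers for illustration.
OFFERS = [
    Offer("Rival A", price=20.0, rating=4.6, group_company=False),
    Offer("Group service", price=22.0, rating=4.4, group_company=True),
    Offer("Rival B", price=18.0, rating=3.9, group_company=False),
]

if __name__ == "__main__":
    print([o.provider for o in rank(OFFERS)])                             # neutral
    print([o.provider for o in rank(OFFERS, self_preference_boost=0.5)])  # self-preferencing
```

The neutral ranking puts "Rival A" first; with the boost, "Group service" jumps to the top without any change in what the customer asked for.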

Generally, companies cannot hide behind algorithms to avoid liability. Antitrust rules apply irrespective of whether an AI agent or person is responsible for a decision. As the Commission’s Horizontal Guidelines note: “if pricing practices are illegal when implemented offline, there is a high probability that they will also be illegal when implemented online. (…) firms (…) cannot avoid liability on the ground that their prices were determined by algorithms. Just like an employee or (…) consultant working under a firm's "direction or control", an algorithm remains under the firm's control, and therefore the firm is liable even if its actions were informed by algorithms.”

However, because agentic AI operates with limited direct human control and has its own vulnerabilities, the focus in establishing liability shifts to design choices, governance processes and oversight mechanisms. As authorities have previously acknowledged, the complexity and opacity of algorithms may make it difficult to determine whether particular conduct is anticompetitive and whether it can be attributed to a specific undertaking.
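One way to picture an oversight mechanism of this kind is a gate between the agent and the market that records every pricing decision and routes some of them to a human. The sketch below is an illustrative assumption, not a control drawn from this post or from regulatory guidance: the 5% threshold, the `cites_competitor` flag (which in practice would be hard to populate reliably) and the class names are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class PriceDecision:
    product: str
    old_price: float
    proposed_price: float
    rationale: str            # explanation the agent records for the change
    cites_competitor: bool    # hypothetical flag: rationale relies on a rival's price move


@dataclass
class OversightGate:
    max_auto_change: float = 0.05  # hypothetical threshold: larger moves need sign-off
    audit_log: list = field(default_factory=list)

    def review(self, decision: PriceDecision) -> str:
        """Approve small, self-standing changes; escalate the rest; log everything."""
        change = abs(decision.proposed_price - decision.old_price) / decision.old_price
        if decision.cites_competitor or change > self.max_auto_change:
            outcome = "escalate_to_human"
        else:
            outcome = "auto_approve"
        # Every decision is logged, whatever the outcome, so it can be reviewed later.
        self.audit_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "product": decision.product,
            "old_price": decision.old_price,
            "proposed_price": decision.proposed_price,
            "rationale": decision.rationale,
            "outcome": outcome,
        })
        return outcome


if __name__ == "__main__":
    gate = OversightGate()
    print(gate.review(PriceDecision("widget", 100.0, 103.0, "input costs rose", False)))
    print(gate.review(PriceDecision("widget", 100.0, 112.0, "rival raised its price", True)))
    print(len(gate.audit_log))
```

The design choice is that nothing bypasses the log: even auto-approved changes leave a record of the agent's stated rationale.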

Enforcers are expected to focus on whether a company could have reasonably predicted or prevented its algorithm's collusive or exclusionary behaviour, and whether appropriate governance was in place. An effective compliance programme may go some way to showing this – although many authorities, including the Commission and the CMA, will not reduce any subsequent fine on that basis. Breaches can lead to fines of up to 10% of global turnover.

There are concrete steps that can be taken to limit potential antitrust risk exposure associated with the use of agentic AI.

29 April 2026